AWS DevOps Agent — Hands-on

Most incidents are not complex.

Something breaks and someone has to figure out what changed. You open CloudWatch, check recent deployments, ask whoever last touched the service. Two hours later, you find a renamed DynamoDB field in last week's commit. The fix? Five minutes.

AWS thinks it has a solution for that.

It's called AWS DevOps Agent, an "always-on, autonomous on-call engineer" that starts investigating the moment an alarm fires. It's built on Amazon Bedrock AgentCore and has been generally available since March 2026.

What it actually is

Connect it to your observability stack, code repos, deployment pipelines, and runbooks. When something breaks, it correlates data across all of them to find what changed.

Three things it does:

- Incident investigation. Alarm fires, agent pulls logs, checks deployments, diffs commits, tells you what changed.
- Proactive recommendations. Between incidents it analyzes patterns and flags recurring issues before they blow up again.
- On-demand questions. "Why did checkout latency spike last Tuesday?" It queries your tools and answers.
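
To make the "what changed" idea concrete, here is a toy sketch of the first step of an investigation: given the alarm time, collect every change event inside a lookback window and list the newest first. It's an illustration under stated assumptions, not how DevOps Agent correlates data internally; the ChangeEvent type, its source labels, and the sample events are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ChangeEvent:
    source: str        # hypothetical labels: "commit", "deployment", "iam"
    description: str
    at: datetime


def recent_changes(events, alarm_at, window=timedelta(hours=24)):
    """Return change events in [alarm_at - window, alarm_at], newest first."""
    start = alarm_at - window
    hits = [e for e in events if start <= e.at <= alarm_at]
    return sorted(hits, key=lambda e: e.at, reverse=True)


# Hypothetical sample data, loosely modeled on the intro's story.
alarm_at = datetime(2026, 1, 15, 10, 0)
events = [
    ChangeEvent("commit", "rename DynamoDB field", alarm_at - timedelta(days=6)),
    ChangeEvent("deployment", "orders service redeployed", alarm_at - timedelta(hours=2)),
    ChangeEvent("iam", "role policy edited", alarm_at - timedelta(minutes=30)),
    ChangeEvent("deployment", "unrelated, after the alarm", alarm_at + timedelta(minutes=5)),
]

# A 7-day lookback catches last week's commit; the 24-hour default would not.
for e in recent_changes(events, alarm_at, window=timedelta(days=7)):
    print(e.at.isoformat(), e.source, e.description)
```

The window is the design choice that matters here: too narrow and last week's renamed field never becomes a suspect, too wide and every change in the account does.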

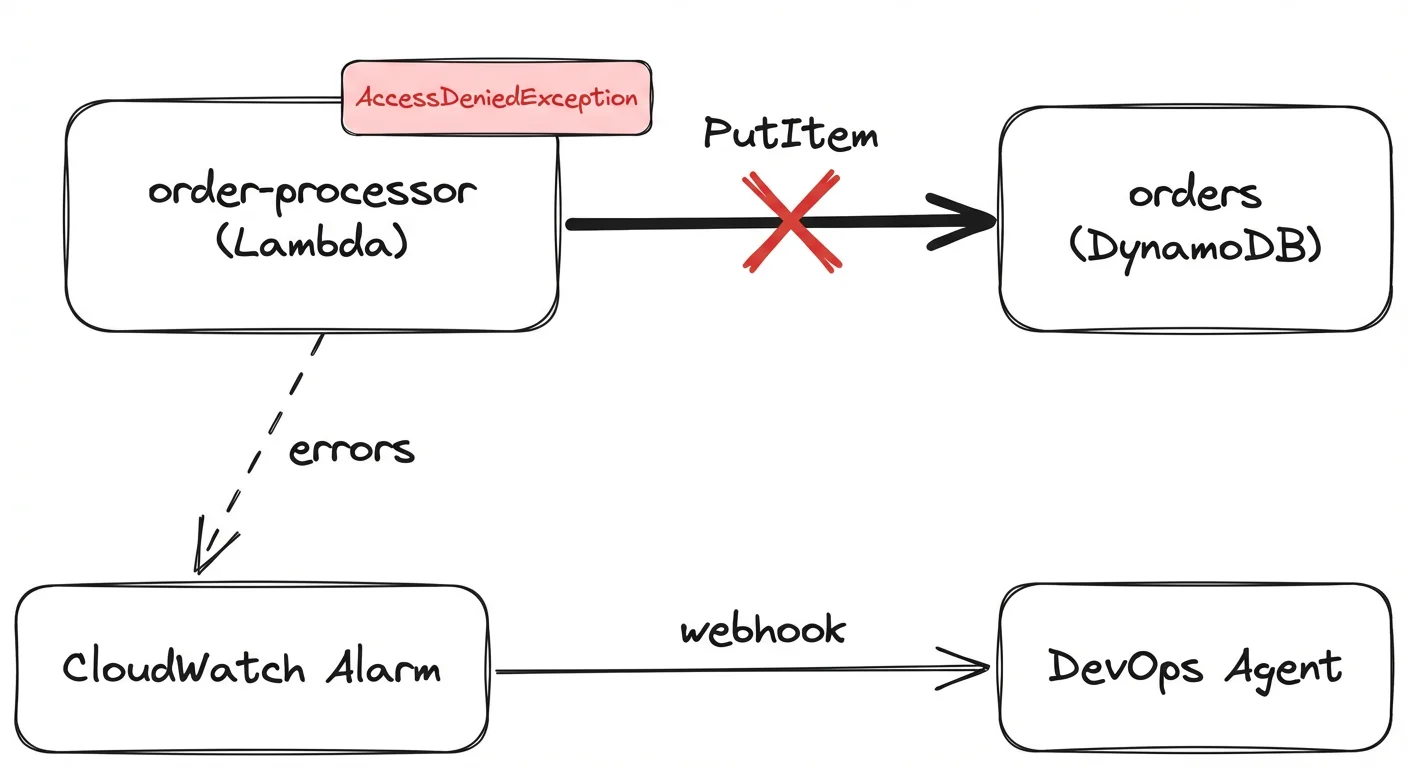

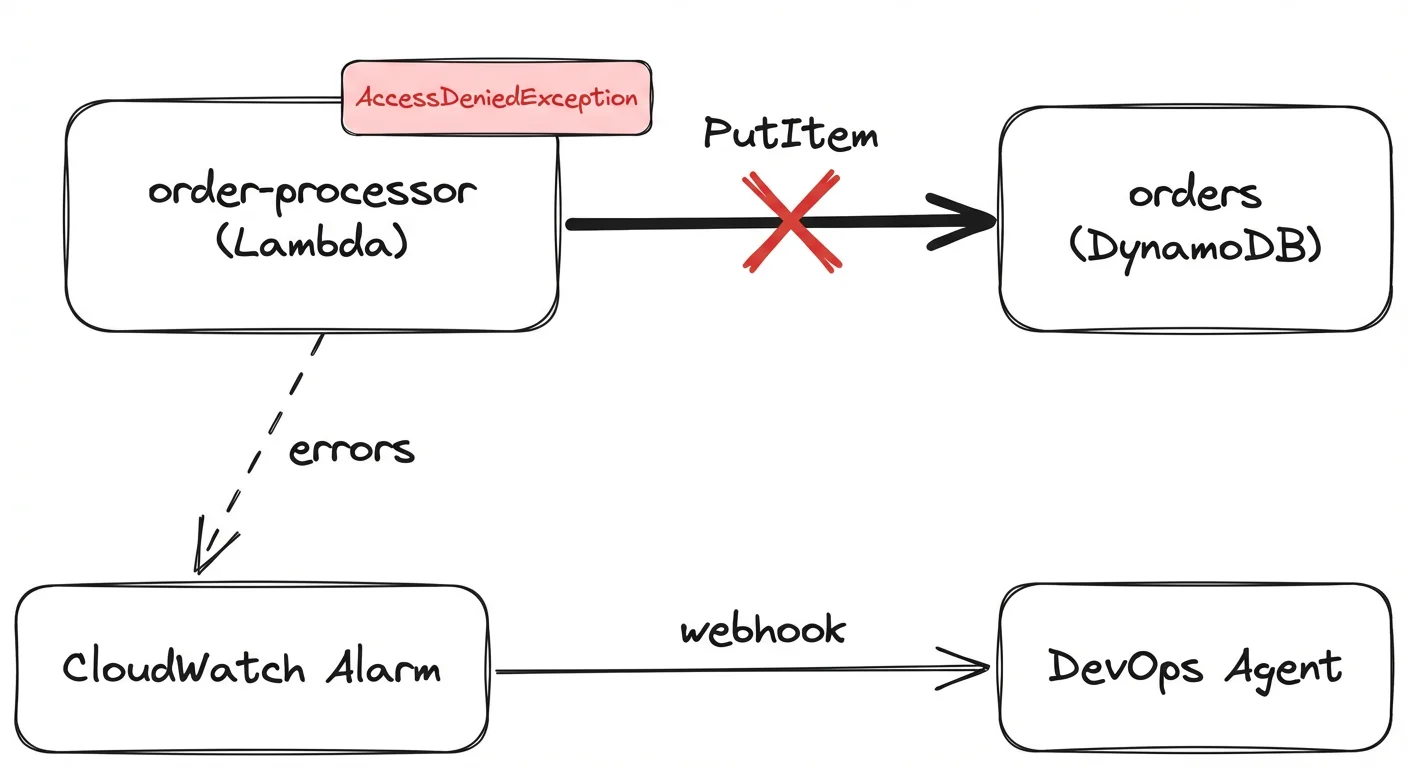

Test 2 — the missing IAM permission

An order processor Lambda calls dynamodb:PutItem, but its IAM role is missing the permission. Classic code-review miss.

The moment the alarm fired, the agent posted this to Slack:

Root cause: Terraform deployment by tobias.schmidt created Lambda function with incomplete IAM permissions. The Terraform configuration is missing the required DynamoDB permissions for the role despite the function's code calling dynamodb:PutItem on table devops-agent-orders.

Not vague. It identified the exact deployment and the exact missing permission, and traced both through CloudTrail timestamps. No human dug through logs.
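
The Test 2 failure boils down to a role policy with no Allow statement for dynamodb:PutItem on the orders table. The sketch below is a deliberately simplified check of that condition: it looks only at Allow statements with glob-style wildcards and ignores Deny, conditions, permission boundaries, and everything else in real IAM evaluation. The region, the account ID, and the logging-only starting policy are hypothetical; the article only says the DynamoDB permission was missing.

```python
from fnmatch import fnmatchcase

# Hypothetical ARN: region and account ID are placeholders.
ORDERS_TABLE_ARN = "arn:aws:dynamodb:eu-central-1:123456789012:table/devops-agent-orders"


def allows(policy: dict, action: str, resource: str) -> bool:
    """Toy check: True if any Allow statement matches both action and resource.

    Ignores Deny, Condition, NotAction/NotResource and the rest of IAM's
    evaluation logic. Illustration only.
    """
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):
        statements = [statements]
    for stmt in statements:
        if stmt.get("Effect") != "Allow":
            continue
        actions = stmt.get("Action", [])
        resources = stmt.get("Resource", [])
        actions = [actions] if isinstance(actions, str) else actions
        resources = [resources] if isinstance(resources, str) else resources
        # Action names are matched case-insensitively, resource ARNs as-is.
        action_ok = any(fnmatchcase(action.lower(), a.lower()) for a in actions)
        resource_ok = any(fnmatchcase(resource, r) for r in resources)
        if action_ok and resource_ok:
            return True
    return False


# Hypothetical role policy in the spirit of Test 2: logging only, no DynamoDB write.
broken_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow",
         "Action": ["logs:CreateLogStream", "logs:PutLogEvents"],
         "Resource": "*"},
    ],
}

# The same policy plus the statement the agent said was missing.
fixed_policy = {
    "Version": "2012-10-17",
    "Statement": broken_policy["Statement"] + [
        {"Effect": "Allow", "Action": "dynamodb:PutItem", "Resource": ORDERS_TABLE_ARN},
    ],
}

print(allows(broken_policy, "dynamodb:PutItem", ORDERS_TABLE_ARN))  # False
print(allows(fixed_policy, "dynamodb:PutItem", ORDERS_TABLE_ARN))   # True
```

For anything beyond a quick sanity check, IAM's own policy simulator (the SimulatePrincipalPolicy API) is the authoritative way to ask the same question.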

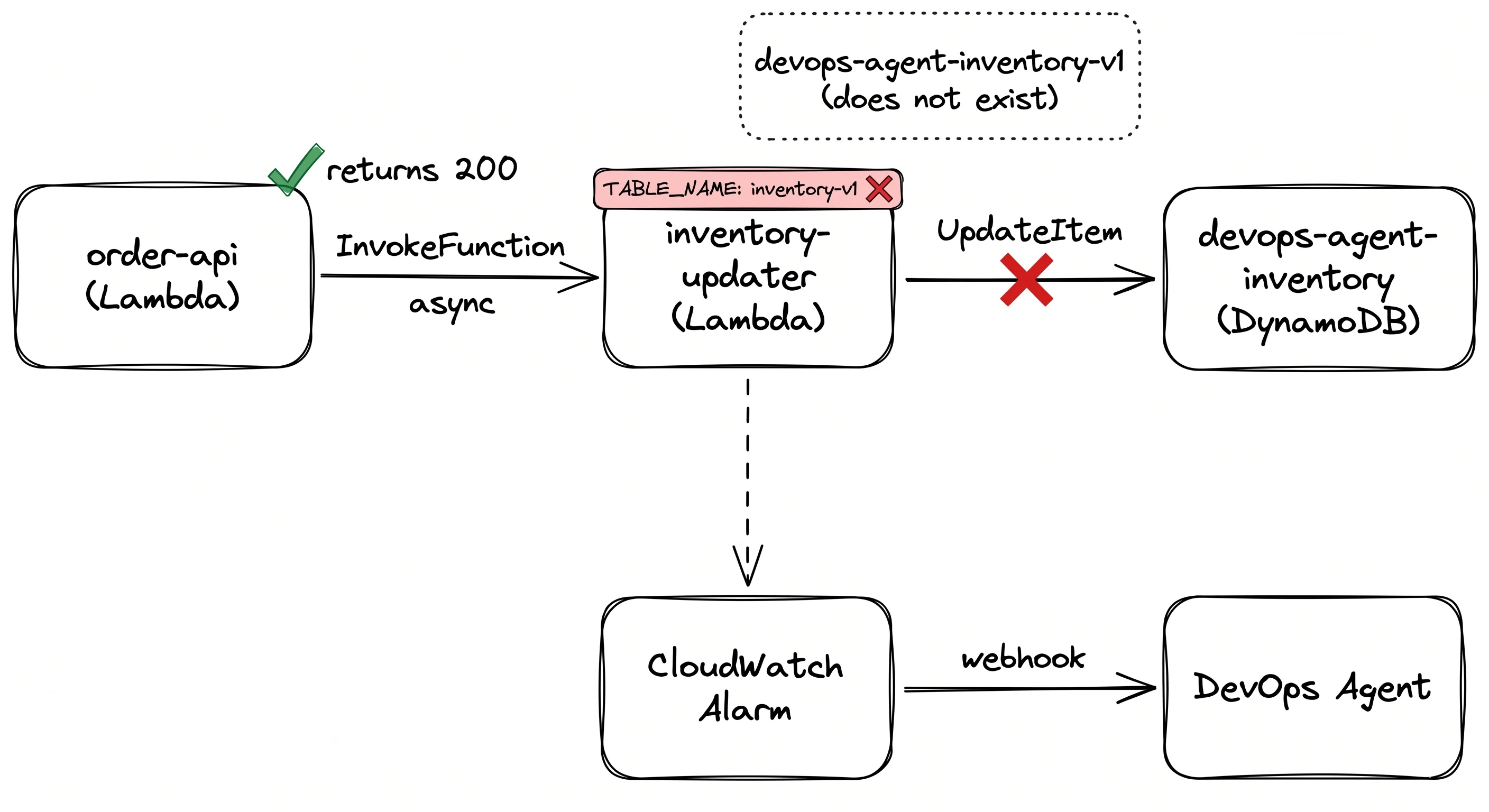

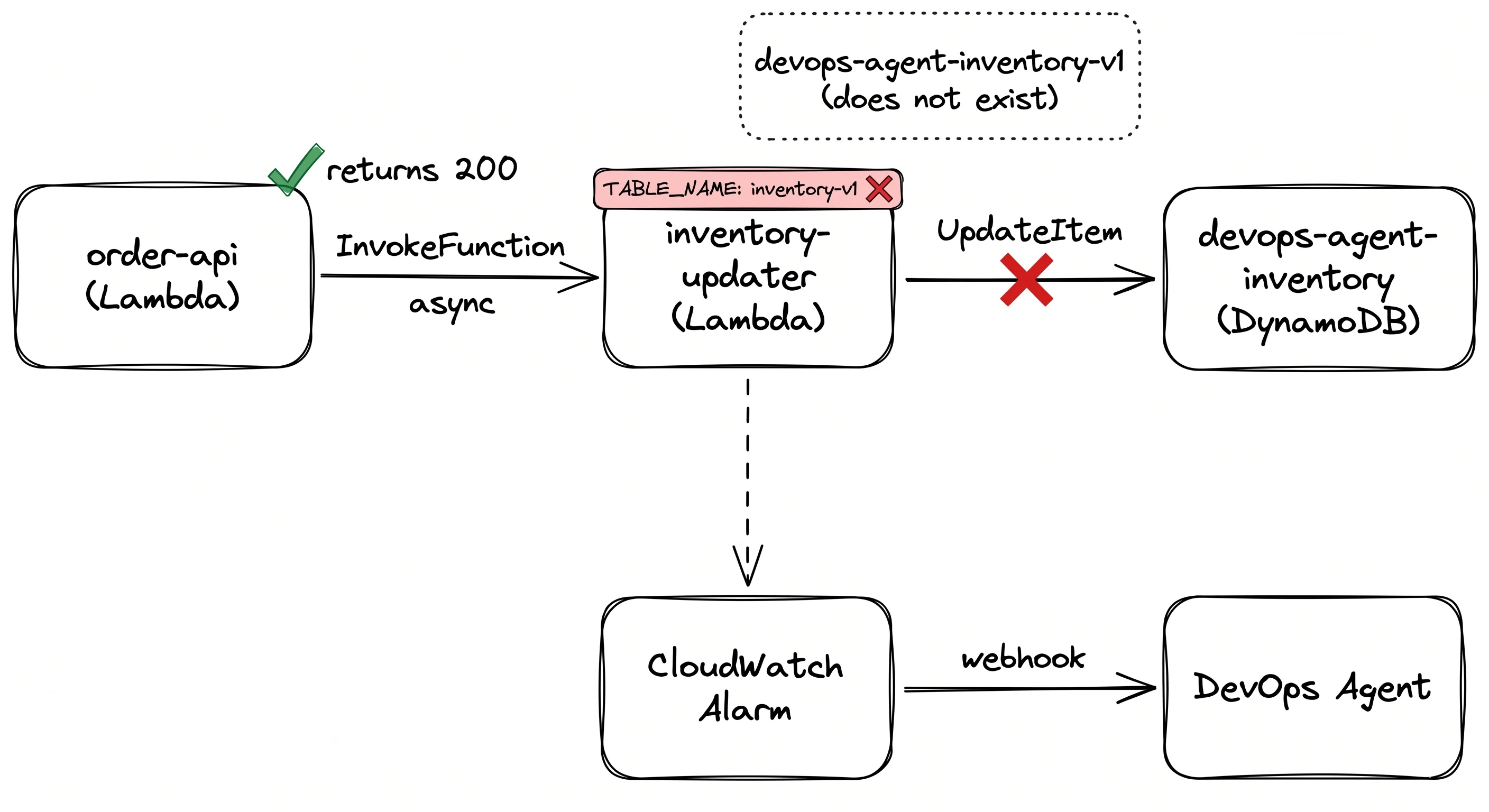

Test 3 — where it picked the wrong side to blame

Lambda env var: TABLE_NAME=devops-agent-inventory-v1. IAM policy: grants UpdateItem on table/devops-agent-inventory (no -v1). Every invocation: AccessDeniedException.

The agent traced everything correctly: the env var, the IAM policy, the exact Terraform commit. Then it concluded: fix the IAM policy and point it at table/devops-agent-inventory-v1.

That fix would resolve the AccessDeniedException and immediately surface a ResourceNotFoundException, because that table doesn't exist.

The actual bug is the env var. TABLE_NAME should be devops-agent-inventory. The IAM policy is correct.
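
The step the agent skipped is one more lookup: does the table named on each side actually exist? A hypothetical helper that encodes that tie-breaker might look like this. It assumes you already have the table names the policy grants access to and the set of tables that exist (in a real account you might get the latter from DynamoDB's ListTables API); it's a sketch of the reasoning, not something the agent exposes.

```python
def which_side_to_fix(env_table: str, policy_tables: set, existing_tables: set) -> str:
    """Hypothetical tie-breaker for an env-var vs. IAM table-name mismatch."""
    if env_table in policy_tables:
        return "no mismatch"
    if env_table not in existing_tables:
        # Widening IAM to a table that doesn't exist only swaps
        # AccessDeniedException for ResourceNotFoundException.
        return "fix the env var"
    # The env var names a real table that the policy doesn't cover.
    return "fix the IAM policy"


# Test 3 as described: the env var has "-v1"; the policy and the real table don't.
print(which_side_to_fix(
    env_table="devops-agent-inventory-v1",
    policy_tables={"devops-agent-inventory"},
    existing_tables={"devops-agent-inventory"},
))  # fix the env var
```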

So: it surfaces evidence brilliantly. It does not always reason about which piece of evidence is the actual root cause.

Where it falls short

- No native CloudWatch integration. No alarm action targets DevOps Agent directly. You build a bridge Lambda that signs the payload with HMAC-SHA256 and POSTs it to a webhook. Not the one-click setup the product page implies.
- Pricing is hard to predict. $0.0083 per agent-second across all task types, which works out to roughly $30 per hour of active time. No cost caps.
- Azure support is shallow. Cross-cloud is real but early. The CloudTrail-based tracing has no equivalent on the Azure side.
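
For the bridge in the first bullet, a minimal Lambda might look like the sketch below. It assumes the alarm reaches the function through an SNS topic and that the webhook expects a hex-encoded HMAC-SHA256 of the raw request body in a header. The environment variable names and the X-Signature-SHA256 header are placeholders, not DevOps Agent's documented contract; check the agent's webhook configuration for the real header name and signature format.

```python
import hashlib
import hmac
import json
import os
import urllib.request

# Placeholder names: set these to whatever your webhook configuration requires.
WEBHOOK_URL = os.environ.get("DEVOPS_AGENT_WEBHOOK_URL", "https://example.invalid/webhook")
WEBHOOK_SECRET = os.environ.get("DEVOPS_AGENT_WEBHOOK_SECRET", "change-me")
SIGNATURE_HEADER = "X-Signature-SHA256"  # hypothetical header name


def sign(body: bytes, secret: str) -> str:
    """Hex-encoded HMAC-SHA256 of the raw request body."""
    return hmac.new(secret.encode("utf-8"), body, hashlib.sha256).hexdigest()


def build_request(alarm: dict) -> urllib.request.Request:
    # Serialize once, then sign exactly the bytes that will be sent.
    body = json.dumps(alarm, separators=(",", ":"), sort_keys=True).encode("utf-8")
    return urllib.request.Request(
        WEBHOOK_URL,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            SIGNATURE_HEADER: sign(body, WEBHOOK_SECRET),
        },
    )


def handler(event, context):
    """Lambda entry point, assuming CloudWatch alarm notifications arrive via SNS."""
    for record in event.get("Records", []):
        # SNS delivers the CloudWatch alarm notification as a JSON string.
        alarm = json.loads(record["Sns"]["Message"])
        with urllib.request.urlopen(build_request(alarm), timeout=10) as resp:
            print("webhook responded", resp.status)
```

Signing the serialized bytes rather than the dict matters: if the JSON is re-serialized after signing, key order or whitespace can change and the receiver's signature check fails.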
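
The pricing bullet's arithmetic is easy to script, which helps when there is no built-in cap. The rate below is the one quoted above; the incident volume and investigation length are hypothetical numbers for illustration, and the budget helper is a do-it-yourself guard, not an AWS feature.

```python
# Rate quoted in the article; check the current AWS pricing page before relying on it.
PRICE_PER_AGENT_SECOND = 0.0083  # USD


def estimate_cost(active_seconds: float, rate: float = PRICE_PER_AGENT_SECOND) -> float:
    """USD cost for a given amount of active agent time."""
    return active_seconds * rate


def seconds_for_budget(budget_usd: float, rate: float = PRICE_PER_AGENT_SECOND) -> int:
    """Whole agent-seconds a budget buys; with no native cap, you track this yourself."""
    return int(budget_usd / rate)


print(f"per hour: ${estimate_cost(3600):.2f}")  # per hour: $29.88

# Hypothetical month: 40 investigations averaging 15 minutes of active time each.
print(f"monthly: ${estimate_cost(40 * 15 * 60):.2f}")  # monthly: $298.80
```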

Worth trying?

If your team spends hours per incident manually correlating logs, deployments, and CloudTrail events, start the 2-month free trial. Setup takes an afternoon, and after that it runs without maintenance.

The fear that this replaces DevOps engineers and SREs is real but premature. It's a good investigator, not a replacement for someone who understands the system. Test 3 proved that.